SLS is dedicated to Improving Outcomes in Minimally Invasive & Robotic Surgery.

SLS is a multispecialty and multidisciplinary organization with a global reach. Our current and past officers and membership reflect interest and leadership in laparoscopic and robotic surgery and represent the knowledge and opinion leaders (KOLs) and pioneers in robotics and computer-assisted surgery, surgical simulation, laparoscopy, endoscopy, and minimally invasive surgery.

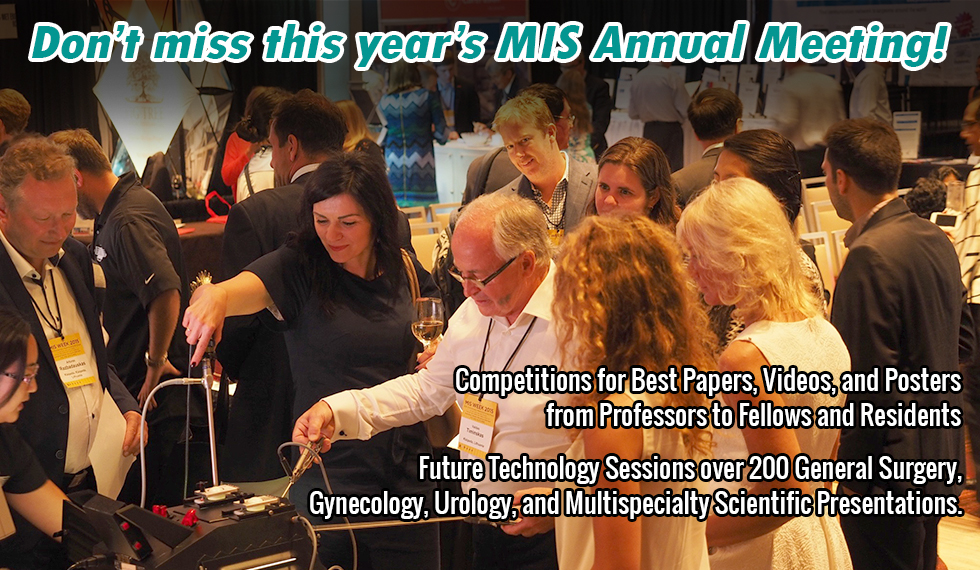

MISWeek is the top multidisciplinary MIS conference in the world. No other society offers cross pollination and learning from other specialties.

Gustavo Stringel

The great success of JSLS is due to the high quality of articles and our unique model, being both a non-profit publisher and society.

Paul Wetter | SLS Chairman Emeritus

SLS provides a collegial and open forum atmosphere for interdisciplinary discussion and dissemination of new and established ideas, techniques and therapies.

Raymond J. Lanzafame | SLS Executive Director

[SLS has], quite honestly, the most impressive information available to surgeons today.

Richard M. Satava | SLS Board Member

ABOUT SLS

SLS is a multispecialty and multidisciplinary Society of general surgeons, gynecologists, urologists, pediatric, thoracic surgeons and others interested in laparoscopic, endoscopic, robotic, and minimally invasive surgical techniques and technologies. SLS endeavors to improve patient care …

Educational Resources

GLOBAL ROBOTIC ASSISTED SURGERY PODCAST – in 2023, GRASP is for surgeons and other surgical professionals to get to grips with the evolution of surgery. Learn from leading surgeons, and other guests, who will be …

MISWeek 2024

MISWeek – The Future of MIS. Minimally Invasive Surgery Week / SLS Annual Meeting is a multispecialty conference of several MIS societies that helps increase knowledge of laparoscopic, robotic, and minimally invasive surgical techniques.

Membership

SLS provides an open multispecialty forum for surgeons and other health professionals interested in minimally invasive surgery and therapy, contributing to the growing knowledge of the diagnostic and therapeutic uses of laparoendoscopic and other minimally invasive techniques.

Online CME Credits

Access Medical Education from Professionals Now!

Up-to-date CME material

From medical professionals like you

Access on desktop and mobile devices

SLS Fellowship Programs

SLS is committed to providing educational opportunities to Residents and Fellows in Training who are interested in pursuing a career in minimally invasive surgery or surgical simulation.

SLS MIS Today

Issues and answers specific to surgeons and what we’re facing now: reimbursement, hiring, legal issues and money, beating stress, solving staffing issues, improving patient communication, and much more.

SLS Career Center

Help With Your Career

New jobs less than 7 days old are available only to Society of Laparoscopic & Robotic Surgeons members. After that period, these jobs become available to everyone.

Virtual Exhibit Hall

The SLS Virtual Exhibit Hall connects physicians and professionals with manufacturers as they search for the most advanced surgical instruments.

Journal of the Society of Laparoscopic & Robotic Surgeons

Journal of the Society of Laparoscopic & Robotic Surgeons, The Most Read MIS Journal, is the multispecialty, peer-reviewed journal of the Society of Laparoscopic & Robotic Surgeons. JSLS seeks to advance minimally invasive surgery by promoting the cross-specialty …

Upcoming Events

Upcoming Conferences and Events.

Nezhat’s History of Endoscopy

In 2005, pioneering surgeon Dr. Camran Nezhat was awarded a fellowship by the American College of Obstetricians and Gynecologists to research and write this important history. In Washington, DC at ACOG and the Library of …

Special Programs

SLS offers special programs for Residents, Fellows-in-Training, Nurses, Affiliated Medical Personnel, and Retired Surgeons.

SLS Special Interest Groups (SIG)

SLS SIGs support the organization’s educational mission. Members of the SIGs present the popular laparoscopy updates at SLS’s Annual Meeting, taking an important leadership role in preparing physicians for the future.

CRSLS

CRSLS, MIS Case Reports from SLS, is dedicated to the publication of Case Reports in the field of minimally invasive surgery. Case Reports are submitted through the JSLS submission system and undergo the same rigorous peer review process.

Fellowship in Advanced Minimally Invasive & Endoscopic Techniques

The Division of General Surgery at Royal Columbian and Eagle Ridge Hospitals is offering a 1-year SLS Fellowship in Advanced Minimally Invasive and Endoscopic Techniques in General and Colorectal Surgery under the leadership of Dr. …

Enhanced General Surgery Fellowship

Florida Surgical Specialists Enhanced General Surgery Fellowship: Advanced Laparoscopic and Robotic Foregut, Hernia and Colorectal Fellowship

Fellowship in Minimally Invasive & Robotic Gynecologic Surgery (FMIGS)

The Marchand Institute for Minimally Invasive Surgery and Steward Health Mountain Vista Medical Center Affiliated Fellowship Program in Minimally Invasive and Robotic Gynecologic Surgery

Fellowship in Specialized Minimally Invasive Surgery

SLS presents an EXCLUSIVE one-year postgraduate fellowship. This fellowship will consist of one year of postgraduate training in benign gynecological disorders such as endometriosis and myomas of the uterus under the direction …

Minimally Invasive Gynecologic Surgery Fellowship (FMIGS) of South Florida

FMIGS of South Florida aims to provide comprehensive advanced training in minimally invasive surgery for graduates of SLS-approved OB/GYN residencies while fostering an environment of innovation, advancement, and research in the field of Minimally …

Fellowship in Specialized Minimally Invasive Surgery

The Department of Obstetrics and Gynecology at NYU Winthrop Hospital, Mineola, NY, is offering a 1-year or 2-year minimally invasive surgery fellowship program under the directorship of Dr. Farr Nezhat. Applications are currently being accepted …

Surgical Simulation Fellowship

The Society of Laparoscopic & Robotic Surgeons has partnered with the University of Washington to promote, screen, and advocate for quality candidates to ensure the rapid spread of minimally invasive surgery worldwide, through a focus …

Top Gun Advanced Laparoscopic Skills and Suturing Program

Become a top gun surgeon by participating in the Top Gun Laparoscopic Skills & Suturing Program. Top Gun is an unbelievable program that decreases the time that it takes to learn how to intracorporeally suture …

Directory of SLS Members

To find a doctor who is up to date on the latest information about minimally invasive surgery, visit the Society of Laparoscopic & Robotic Surgeons Members page to search for a surgeon in your area.

ORReady

ORReady is a worldwide, multispecialty initiative to encourage steps that improve surgical outcomes and save lives. Working together worldwide, medical societies and other organizations are sharing ideas that work to help improve outcomes for surgical patients.

SLS Scholarly Search

The “one-click” scholarly search is here! Search through SLS’s open access journal, textbooks, various conference syllabi, and much more. Look up the hottest topics or search JSLS Online for articles pertaining to your research.

SLS TV

This site contains educational videos that are open access and available worldwide in multiple formats.

Prevention & Management of Laparoendoscopic Surgical Complications

Prevention and Management of Laparoendoscopic Surgical Complications, Third Edition (PM3) is a medical textbook that covers laparoscopy, endoscopy, minimally invasive surgery, and other advances that help patients recover quickly and safely.
